I Spent 53 Hours and 18 Minutes in Claude Cowork on One Project. Here's What I Learned.

3 things I got wrong, 4 things that saved me, and why your CLAUDE.md file matters more than your prompts.

Yesterday I submitted a deliverable for a consulting engagement I’ve been working on.

A 37-slide presentation and 16 spreadsheets covering recommendations, calculations, and restructuring scenarios.

I built all of it with Claude Cowork.

This project was a powerful example of what is possible now. I wrote about that here: how I did three weeks of work in a single afternoon.

In six weeks, working part-time, I put together a report that would have taken a consulting team months pre-AI.

But this isn’t a hype-bro article about how amazing AI is.

Because if you’re not careful, Claude has some default behaviors that can lead to overengineering, misaligned analysis, and unnecessary rework.

I know because I had all three in heaps on this project.

So, I asked Claude to help me figure out why some of those problems kept happening. I wanted to understand its behavior so I could learn from it.

Here’s what I got back.

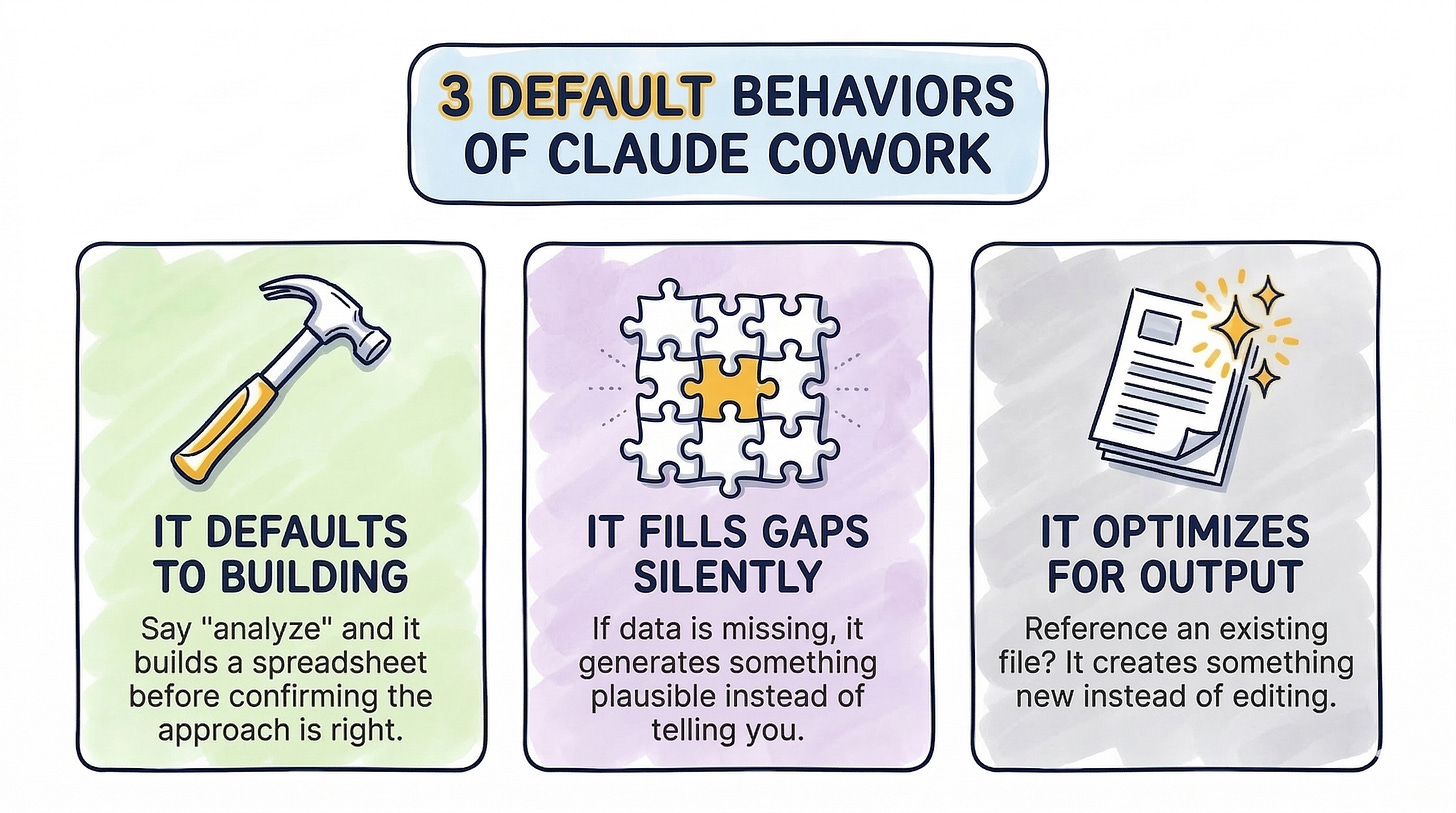

How Claude Cowork actually behaves (and why it matters)

It defaults to building.

When you say “analyze this,” Claude’s strongest instinct is to produce something. A workbook. A formatted document.

It’ll build a beautiful spreadsheet with color-coded headers before either of you has confirmed the analytical approach is sound.

It fills gaps without telling you.

This is the most dangerous one. When Claude hits a data gap, it doesn’t stop and say “I don’t have this.” It generates something plausible.

If a table needs five rows and the data only supports four, it writes a fifth. One wrong number in consulting work erodes trust in everything.

It interprets your instructions through the lens of “what can I produce.”

When you reference an existing file, Claude’s default is to create something new inspired by it, not to open the file and make changes. Even when you think you’re being clear.

Understanding these three things would have changed how I worked from day one.

3 things I got wrong

1. I didn’t go deep enough on the analysis.

This was a massive project. The office I was working with spends $20 million a year just on staffing. I was looking at efficiencies, affordability, restructuring.

Way more than one person would normally take on.

And some of the analysis was in technical areas where I don’t have direct experience.

I knew I could do it with Claude’s help, and when the output came back looking thorough and well-structured, I’d do a spot check and then move on.

The problem is that in the areas I knew well, I could spot mistakes quickly. In the areas I didn’t, everything looked right because I didn’t know what wrong looked like.

The day before submitting my report, I spotted a number that looked too low.

When I dug in, I found out we didn’t actually have the data we needed to do the analysis properly. The whole methodology was circular. It had been sitting in the project for weeks because the output looked polished and complete.

I’ve written before about treating AI like a graduate assistant. This is where that breaks down.

A graduate assistant who hands you something impressive-looking in an area you’re not an expert in is dangerous if you don’t know the right questions to ask.

The fix I landed on was adding this rule to my CLAUDE.md file (more on that in a minute):

“Before building any deliverable or analysis, present the approach: what question are we answering, what data do we need, what methodology, what assumptions, what gaps. Get approval before producing.”

I have no idea if that will be enough. The best fix is probably to ask a different question.

Instead of telling Claude “analyze this” (where Claude will assume “based on what we have, and what we have is sufficient”), ask it “do we have what we need to do this analysis?”

That’s a subtle difference, but it’s a big one.
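
To make that concrete, here’s a minimal Python sketch of a “do we have what we need?” check. The function name and the input names are hypothetical, made up for illustration; the idea is just to list what an analysis requires and compare it with what’s actually on hand before anything gets built.

```python
def missing_inputs(required, available):
    """Return the required inputs that are not available, in their original order."""
    have = set(available)
    return [name for name in required if name not in have]


# Hypothetical example: inputs a staffing-cost analysis might need.
required = ["headcount_by_role", "salary_by_role", "hours_by_task"]
available = ["headcount_by_role", "salary_by_role"]

gaps = missing_inputs(required, available)
if gaps:
    print("Stop and ask before analyzing. Missing:", ", ".join(gaps))
else:
    print("All required inputs are on hand.")
```

If the list of gaps isn’t empty, that’s the cue to stop and ask rather than analyze.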

2. I let Claude overengineer everything.

Remember what I said about Claude defaulting to building?

I’d given it all these powerful analytical capabilities: skills for creating spreadsheets, data analysis tools, the whole kit.

And without limits, it just goes to town.

Early in the project, Claude built me analyses with sensitivity tables, multi-tab models, and interactive dashboards. I thought it was impressive.

Then I started thinking about how I’d actually hand this to the client.

Could they use it? Would they understand it? If they had questions, could I explain and defend every number in a nine-tab, 4,000-formula spreadsheet?

No. I couldn’t.

I simplified to a standard format, but even that was overkill for the simpler recommendations that only needed a paragraph and a few numbers. Because I told Claude that standard format was our…well, standard…it gave me every recommendation in that format, whether it needed it or not.

Claude isn’t doing this because it thinks complex is better.

It’s doing what it’s designed to do: maximum analysis. If you say “analyze” without giving it parameters, it’s going to go as deep and wide as it can.

The fix: before every analysis, ask “what’s the simplest version of this that would be useful?”

3. I wasn’t explicit enough, even when I thought I was.

Multiple times during the project, I had a working file and wanted Claude to update it.

Merge two presentations. Fix numbers in a spreadsheet. Add content to an existing deck.

Sometimes Claude would do exactly what I asked. Other times it rebuilt from scratch. I’d have to go back and course-correct to what we had previously.

Idiot me only thought to ask why at the end of the project.

And I still don’t fully know why it happened some times and not others. I think in many cases I did tell it to edit the file.

But with so much going on (multiple sessions, dozens of files, constant context-switching between different parts of the work), my instructions probably weren’t as precise as I thought they were.

This was also a new way of working for me. I’ve always worked linearly. One thing, then the next. This project was the first time I ran multiple sessions at once, cycling between different parts of the work. That’s powerful, but it means your instructions need to be even more precise than you think.

The fix: be painfully specific. Not “combine these two presentations.” Instead: “Open this file. Add slides 4-8 from that file after slide 12. Update the number on slide 3 to $X.” Claude Cowork works with your actual files. Use that.

4 things that saved me

1. I separated research from production.

I didn’t get all the data, the transcripts, the files, and just tell Claude to build me a final report. That’s the rookie move. (I’ve written about why.)

The first three weeks were discovery. Reading transcripts, having calls with the client, scanning documents, and building inventories. No final deliverables.

Once I had a sense of how I wanted to structure the final report, I had Claude create a clean file structure and bring everything from our previous working folders into one place so I could fact-check it.

We went step-by-step. Never jumping ahead.

2. I built a CLAUDE.md file and kept it updated.

This is the single most useful thing I did.

A CLAUDE.md is a markdown file that Claude reads at the start of every session. It holds project scope, source file locations, verified data, rules for how to work together, and a session startup checklist.

I didn’t write most of it myself.

I told Claude to create it and it figured out what should go there. At various points I told it to build reference docs, indexes, and inventories so it knew where things were.

Then I’d tell it to update the CLAUDE.md so it would have that context next session.

Every session started with that shared context instead of starting from zero. If you do nothing else from this list, do this. (Here’s how I set mine up.)

3. I turned every failure into a permanent rule.

When Claude pulled wrong numbers from memory instead of reading the source file, that became a data accuracy rule.

When it rebuilt files from scratch instead of editing, that became “edit existing files, don’t start fresh unless I say so.”

When it filled in data gaps with plausible guesses, that became “if you can’t verify a number, say so.”

Each failure got written into the CLAUDE.md as a permanent instruction.

Not a one-time correction. A systemic change.

The project got smoother as the rules built up, because each session inherited every lesson from the ones before it.
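
Here’s a small Python sketch of the rule-accumulation idea. It’s an illustration, not how the project actually did it: Claude made the CLAUDE.md updates when I asked. The `add_rule` helper and the flat bullet-list layout are assumptions, and the example writes to a temporary folder rather than a real project. The two rules are the ones quoted above.

```python
import tempfile
from pathlib import Path


def add_rule(path, rule):
    """Append a rule as a bullet to the file at `path` unless it is already there.

    Returns True if the rule was added, False if it was a duplicate.
    """
    path = Path(path)
    existing = path.read_text(encoding="utf-8") if path.exists() else ""
    bullet = f"- {rule}"
    if bullet in existing.splitlines():
        return False
    # Make sure the new bullet starts on its own line.
    separator = "" if existing == "" or existing.endswith("\n") else "\n"
    path.write_text(existing + separator + bullet + "\n", encoding="utf-8")
    return True


with tempfile.TemporaryDirectory() as tmp:
    claude_md = Path(tmp) / "CLAUDE.md"  # hypothetical location
    add_rule(claude_md, "Edit existing files, don't start fresh unless I say so.")
    add_rule(claude_md, "If you can't verify a number, say so.")
    add_rule(claude_md, "If you can't verify a number, say so.")  # duplicate, skipped
    print(claude_md.read_text(encoding="utf-8"))
```

The duplicate check matters more than it looks: the same failure tends to come up more than once, and a rules file padded with repeats is harder to read.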

4. I caught every error before it reached the client.

I spot-checked every formula. Verified every number against the source files. Reviewed every slide.

The same stuff you’d do if you were a senior consultant with a junior team doing the work.
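
Part of that checking can be mechanized. Here’s a minimal Python sketch, assuming the reported figures and the source figures have already been pulled into dictionaries keyed by label. The function name and the figures are hypothetical, made up for illustration.

```python
import math


def check_figures(reported, source, rel_tol=1e-9):
    """Compare reported figures with source figures.

    Returns a dict mapping each reported label to "ok", "mismatch",
    or "unverified" (no source value to check against).
    """
    results = {}
    for label, value in reported.items():
        if label not in source:
            results[label] = "unverified"
        elif math.isclose(value, source[label], rel_tol=rel_tol):
            results[label] = "ok"
        else:
            results[label] = "mismatch"
    return results


# Hypothetical figures, not from the project.
source = {"total_staff": 120, "overtime_hours": 3400}
reported = {"total_staff": 120, "overtime_hours": 4300, "vacancy_rate": 0.08}

for label, status in check_figures(reported, source).items():
    print(f"{label}: {status}")
```

Keeping “unverified” separate from “mismatch” is deliberate. A number with no source behind it is a different problem from a number that disagrees with its source, and a human needs to look at both.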

Building the formulas and the deliverables? That’s Claude’s job.

Auditing them? That’s mine.

I also had Claude continuously audit its own work, which surfaced a lot of mistakes along the way.

Errors happened. Some were subtle (a formula that netted surpluses against deficits instead of summing gaps). Some were obvious (a number that didn’t match the source).

None of them shipped.

That’s the job.

You are the quality gate. Not Claude.
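
The netting error is easy to see with made-up numbers. This Python sketch is one reading of that mistake, not the actual formula from the project. Each unit has a balance, positive for a surplus and negative for a deficit; the function names and figures are hypothetical.

```python
def net_balance(balances):
    """Add everything up, letting surpluses cancel deficits."""
    return sum(balances.values())


def total_gap(balances):
    """Add up only the shortfalls, unit by unit, as a positive number."""
    return sum(-b for b in balances.values() if b < 0)


# Hypothetical balance per unit: positive = surplus, negative = deficit.
balances = {"Unit A": 3, "Unit B": -5, "Unit C": 4, "Unit D": -2}

print("Net balance:", net_balance(balances))
print("Total gap:", total_gap(balances))
```

With these figures the net balance is 0 while the total gap is 7, so a netted formula would report no shortfall even though two units are short.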

The real takeaway

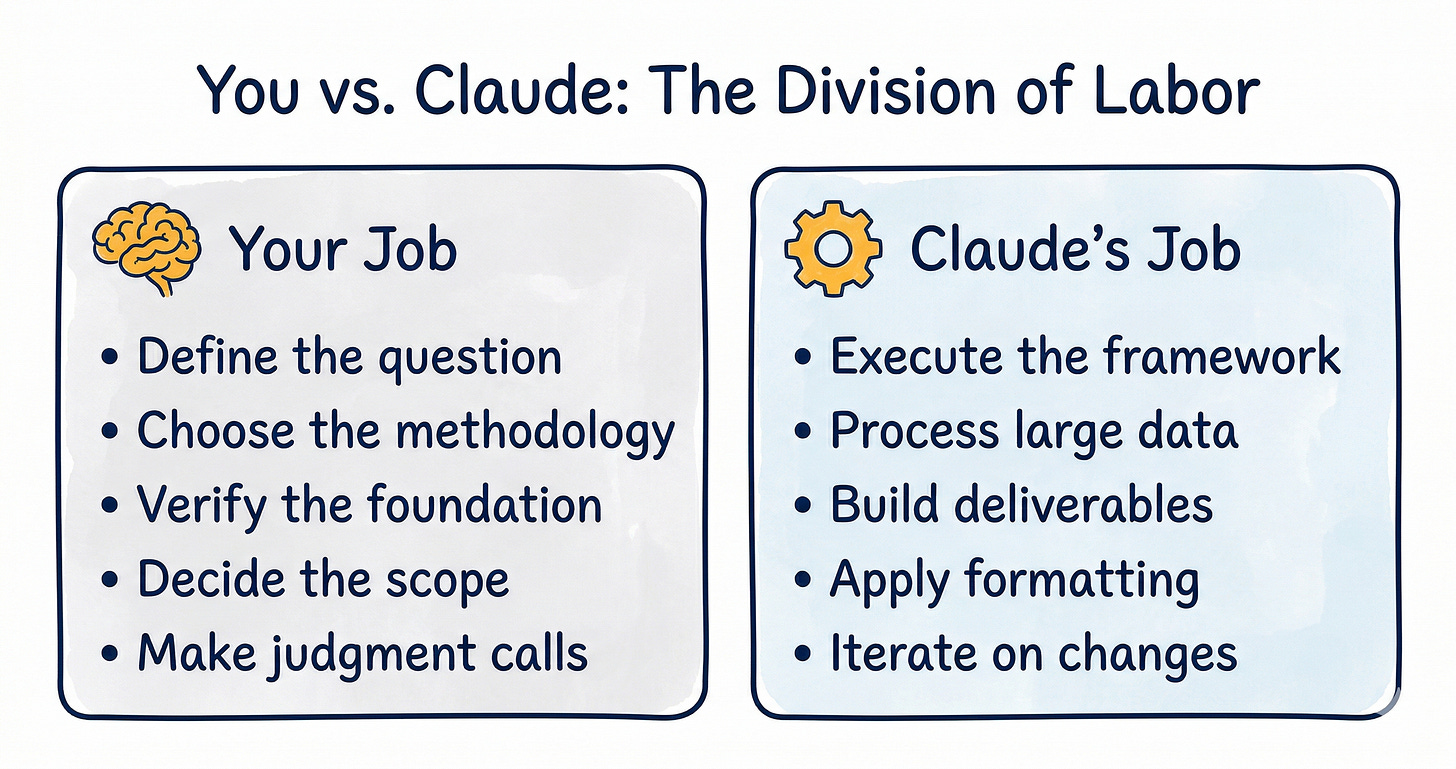

The project worked because I figured out the division of labor.

My job: define the question. Choose the methodology. Verify the foundation. Decide the scope. Make judgment calls about what matters.

Claude’s job: execute within a defined framework. Process large volumes of data. Build formatted deliverables from verified analysis. Iterate quickly on specific, well-scoped changes.

The project drifted when I was implicitly asking Claude to do my job (choose the methodology, decide what mattered, fill gaps creatively) and when I was doing Claude’s job (reformatting slides, fixing formatting cell by cell).

Get that division right from session one, and you’ll save yourself a lot of the pain I went through over six weeks.
